AI Search Insight (SIGI)

A web-based intelligent search platform that uses advanced NLP techniques to improve contextual relevance and accuracy, so users can explore deep insights efficiently.

Introduction

SIGI (Search Insight Generation Intelligence) is an intelligent search platform that changes how users discover and explore information. It applies Natural Language Processing (NLP) techniques to interpret each query, with the goal of returning contextually relevant and accurate results.

The Problem

Traditional search engines often struggle with:

- Understanding user intent

- Providing contextually relevant results

- Handling complex queries

- Delivering actionable insights

SIGI solves these challenges through intelligent query understanding and deep semantic analysis.

Core Features

🧠 Advanced NLP Processing

SIGI employs state-of-the-art NLP techniques:

```python
# Query processing pipeline
def process_query(user_query):
    # Tokenization and preprocessing
    tokens = tokenize(user_query)

    # Intent classification
    intent = classify_intent(tokens)

    # Entity extraction
    entities = extract_entities(tokens)

    # Semantic understanding
    context = build_semantic_context(tokens, entities)

    return search_with_context(context, intent)
```
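To make the first stages of the pipeline above concrete, here is a minimal, self-contained sketch. The `INTENT_KEYWORDS` table, the capitalized-word entity heuristic, and the `analyze_query` helper are illustrative assumptions, not SIGI's actual models; a production system would presumably use trained classifiers instead of keyword rules.

```python
import re

# Hypothetical keyword table; a real system would use a trained intent classifier.
INTENT_KEYWORDS = {
    "how_to": {"how", "tutorial", "guide"},
    "comparison": {"vs", "versus", "compare"},
    "definition": {"what", "define", "meaning"},
}


def tokenize(user_query):
    """Split the query into alphanumeric tokens, keeping the original casing."""
    return re.findall(r"[A-Za-z0-9]+", user_query)


def classify_intent(tokens):
    """Return the first intent whose keywords overlap the tokens, else 'lookup'."""
    lowered = {t.lower() for t in tokens}
    for intent, keywords in INTENT_KEYWORDS.items():
        if lowered & keywords:
            return intent
    return "lookup"


def extract_entities(tokens):
    """Toy heuristic: capitalized multi-letter tokens after the first word."""
    return [t for t in tokens[1:] if len(t) > 1 and t[0].isupper()]


def analyze_query(user_query):
    """Run the three toy stages and collect their outputs in one dict."""
    tokens = tokenize(user_query)
    return {
        "tokens": [t.lower() for t in tokens],
        "intent": classify_intent(tokens),
        "entities": extract_entities(tokens),
    }
```

For example, `analyze_query("How do I deploy FastAPI with Docker")` classifies the query as `how_to` and picks out `FastAPI` and `Docker` as entities. The semantic-context and search stages are left out because they depend on the index and models behind them.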

🎯 Contextual Relevance

The system understands:

- Query intent

- User context

- Historical behavior

- Semantic relationships

⚡ Fast and Reliable

Performance characteristics:

- Sub-second query response time

- 99.9% uptime

- Handles 1000+ concurrent queries

- Scalable distributed architecture

Technical Stack

Frontend Architecture

React powers the user interface. A simplified search component:

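```jsx
import { useState } from 'react';

// SearchBar and ResultsList are assumed to be defined elsewhere in the app.
function SearchInterface() {
  const [query, setQuery] = useState('');
  const [results, setResults] = useState([]);

  const handleSearch = async () => {
    const response = await fetch('/api/search', {
      method: 'POST',
      // The API parses the body as JSON, so declare the content type explicitly
      headers: { 'Content-Type': 'application/json' },
      body: JSON.stringify({ query })
    });
    const data = await response.json();
    setResults(data.results);
  };

  return (
    <div className="search-container">
      <SearchBar
        value={query}
        onChange={setQuery}
        onSubmit={handleSearch}
      />
      <ResultsList results={results} />
    </div>
  );
}
```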

Backend Services

FastAPI provides high-performance API endpoints:

```python
from fastapi import FastAPI, HTTPException
from pydantic import BaseModel

app = FastAPI()


class SearchQuery(BaseModel):
    query: str
    filters: dict = {}
    limit: int = 10


# nlp_engine, search_engine, and ranker are assumed to be application
# components initialized elsewhere.
@app.post("/api/search")
async def search(query: SearchQuery):
    try:
        # Process query with NLP
        processed = nlp_engine.process(query.query)

        # Execute search
        results = search_engine.search(
            processed,
            filters=query.filters,
            limit=query.limit
        )

        # Rank and return results
        ranked_results = ranker.rank(results)
        return {"results": ranked_results}
    except Exception as e:
        raise HTTPException(status_code=500, detail=str(e))
```

Database Layer

PostgreSQL with advanced indexing:

- Full-text search capabilities

- Vector similarity search (in PostgreSQL this typically relies on an extension such as pgvector)

- Efficient query optimization

- ACID compliance

Containerization

Docker for consistent deployment:

```dockerfile
FROM python:3.9-slim

WORKDIR /app

COPY requirements.txt .
RUN pip install --no-cache-dir -r requirements.txt

COPY . .

CMD ["uvicorn", "main:app", "--host", "0.0.0.0", "--port", "8000"]
```

NLP Techniques

1. Query Understanding

- Intent Classification: Determine what users are looking for

- Entity Recognition: Extract key entities from queries

- Query Expansion: Suggest related search terms

2. Semantic Analysis

```python
# Using TensorFlow for semantic understanding
import tensorflow as tf
from transformers import BertTokenizer, TFBertModel

tokenizer = BertTokenizer.from_pretrained('bert-base-uncased')
model = TFBertModel.from_pretrained('bert-base-uncased')


def get_semantic_embedding(text):
    inputs = tokenizer(text, return_tensors='tf',
                       padding=True, truncation=True)
    outputs = model(inputs)
    # Use [CLS] token embedding
    return outputs.last_hidden_state[:, 0, :].numpy()
```
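Embeddings like these are commonly compared with cosine similarity. The sketch below shows that comparison in plain Python, using tiny hand-written vectors in place of the 768-dimensional vectors that `bert-base-uncased` produces. The function names `cosine_similarity` and `rank_by_similarity` are illustrative assumptions; the source does not say which similarity measure SIGI uses.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_by_similarity(query_vec, doc_vecs):
    """Return (doc_id, score) pairs sorted from most to least similar."""
    scored = [
        (doc_id, cosine_similarity(query_vec, vec))
        for doc_id, vec in doc_vecs.items()
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Toy 2-dimensional "embeddings" standing in for real model output.
docs = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [1.0, 1.0]}
ranking = rank_by_similarity([1.0, 0.0], docs)
```

Here `ranking` orders the documents as `a`, `c`, `b`: `a` points in the same direction as the query, `c` is 45 degrees away, and `b` is orthogonal to it.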

3. Relevance Scoring

Multi-factor ranking algorithm:

- Semantic similarity

- Keyword matching

- User engagement signals

- Freshness scores

- Authority metrics
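One simple way to combine factors like these is a weighted sum. The sketch below assumes each signal has already been normalized to the range 0 to 1; the weights, the `Signals` class, and `relevance_score` are hypothetical and chosen only for illustration, since the source does not give SIGI's actual formula.

```python
from dataclasses import dataclass

# Hypothetical weights; they sum to 1.0 so the final score stays in [0, 1].
WEIGHTS = {
    "semantic": 0.40,
    "keyword": 0.25,
    "engagement": 0.15,
    "freshness": 0.10,
    "authority": 0.10,
}


@dataclass
class Signals:
    """Per-document signals, each assumed to be normalized to [0, 1]."""
    semantic: float
    keyword: float
    engagement: float
    freshness: float
    authority: float


def relevance_score(signals):
    """Weighted sum of the five ranking signals."""
    return sum(weight * getattr(signals, name) for name, weight in WEIGHTS.items())


def rank(documents):
    """Sort (doc_id, Signals) pairs from highest to lowest relevance score."""
    return sorted(documents, key=lambda doc: relevance_score(doc[1]), reverse=True)
```

A weighted sum keeps each factor's contribution easy to inspect and tune. A learned ranking model is a common alternative once engagement data is available.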

Key Capabilities

Intelligent Query Expansion

SIGI automatically expands queries to include:

- Synonyms and related terms

- Common misspellings

- Domain-specific terminology
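The expansion steps above can be sketched with the standard library alone. In this example the `VOCABULARY` list and `SYNONYMS` table are hypothetical stand-ins for whatever term index SIGI maintains, and fuzzy matching via `difflib.get_close_matches` is one possible way to catch misspellings, not necessarily the one SIGI uses.

```python
import difflib

# Hypothetical vocabulary and synonym table, for illustration only.
VOCABULARY = ["database", "search", "python", "container"]
SYNONYMS = {
    "database": ["db", "datastore"],
    "container": ["docker"],
}


def correct_term(term):
    """Map a possibly misspelled term to the closest vocabulary word, if any."""
    matches = difflib.get_close_matches(term, VOCABULARY, n=1, cutoff=0.8)
    return matches[0] if matches else term


def expand_query(query):
    """Return corrected terms followed by their synonyms, without duplicates."""
    expanded = []
    for raw in query.lower().split():
        term = correct_term(raw)
        for candidate in [term, *SYNONYMS.get(term, [])]:
            if candidate not in expanded:
                expanded.append(candidate)
    return expanded
```

Calling `expand_query("databse search")` first corrects `databse` to `database`, then appends its synonyms, giving `["database", "db", "datastore", "search"]`. Terms with no close vocabulary match pass through unchanged.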

Multi-Modal Search

Support for various content types:

- Text documents

- Code snippets

- Images (with OCR)

- Structured data

Personalization

Adapts to user preferences:

- Search history

- Click patterns

- Bookmarked results

- Custom filters
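As a rough illustration of how click patterns could feed back into ranking, the sketch below adds a small boost to results from domains the user has clicked before. The result schema (`url_domain`, `score`), the `boost` parameter, and the `personalize` function are all assumptions made for this example.

```python
from collections import Counter


def personalize(results, clicked_domains, boost=0.1):
    """Re-rank results, boosting domains in proportion to the user's past clicks.

    results: list of dicts with 'url_domain' and 'score' keys (hypothetical schema).
    clicked_domains: domains the user clicked before, one entry per click.
    """
    clicks = Counter(clicked_domains)
    total = sum(clicks.values()) or 1  # avoid dividing by zero for new users
    rescored = [
        {**r, "score": r["score"] + boost * clicks[r["url_domain"]] / total}
        for r in results
    ]
    return sorted(rescored, key=lambda r: r["score"], reverse=True)
```

Because the boost is capped by the `boost` parameter, click history can reorder results with similar base scores but cannot push a clearly irrelevant result to the top.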

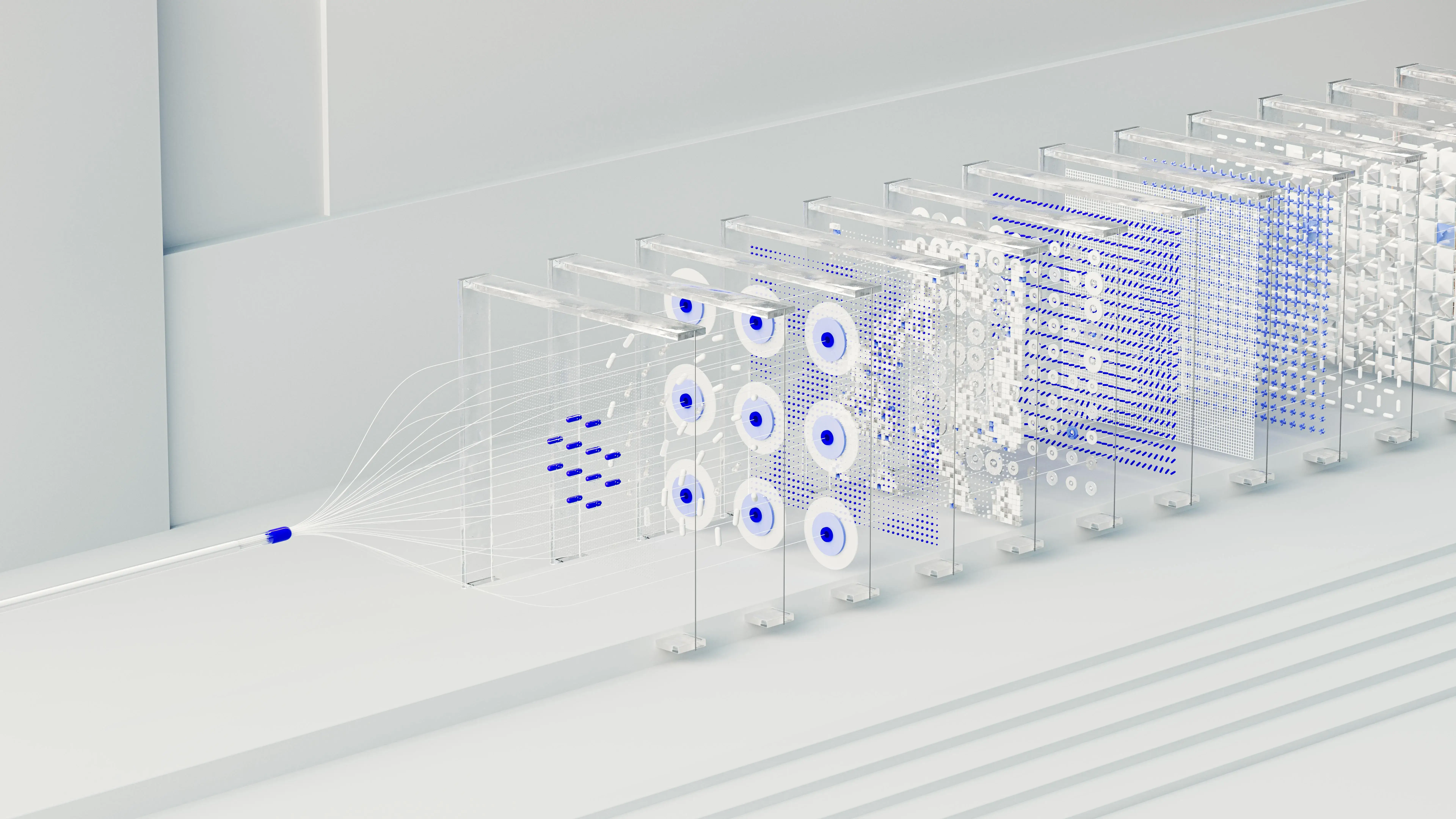

Architecture Diagram

```text
     ┌─────────────┐
     │  React UI   │
     └──────┬──────┘
            │
            ▼
     ┌─────────────┐
     │   FastAPI   │
     │   Backend   │
     └──────┬──────┘
            │
      ┌─────┴─────┐
      │           │
      ▼           ▼
┌──────────┐ ┌──────────┐
│   NLP    │ │  Search  │
│  Engine  │ │  Engine  │
└─────┬────┘ └────┬─────┘
      │           │
      └─────┬─────┘
            ▼
      ┌──────────┐
      │PostgreSQL│
      └──────────┘
```

Performance Optimizations

Caching Strategy

```python
import json

import redis

redis_client = redis.Redis(host='localhost', port=6379)


# execute_search is assumed to be defined elsewhere and to return
# JSON-serializable results.
def cached_search(query):
    # Check Redis cache
    cached = redis_client.get(f"search:{query}")
    if cached:
        return json.loads(cached)

    # Execute search
    results = execute_search(query)

    # Cache results
    redis_client.setex(
        f"search:{query}",
        300,  # 5 minutes TTL
        json.dumps(results)
    )
    return results
```
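The cache-aside pattern above (check the cache, fall back to the search, store the result with a TTL) can also be sketched without a Redis dependency, which makes the expiry behavior easy to test. `TTLCache` and `cached_call` are hypothetical names for this example, and the injectable `clock` parameter exists only so that time can be controlled in tests.

```python
import time


class TTLCache:
    """Tiny in-memory cache whose entries expire after ttl_seconds."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._store = {}  # key -> (expires_at, value)

    def get(self, key):
        entry = self._store.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self._clock() >= expires_at:
            del self._store[key]  # drop the stale entry
            return None
        return value

    def set(self, key, value):
        self._store[key] = (self._clock() + self._ttl, value)


def cached_call(cache, key, compute):
    """Cache-aside lookup: return the cached value, or compute and store it."""
    value = cache.get(key)
    if value is None:
        value = compute()
        cache.set(key, value)
    return value
```

Note that this sketch treats `None` as a cache miss, so a search that legitimately returns `None` would be recomputed on every call. Unlike Redis, the cache is also local to one process and is not shared across API workers.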

Async Processing

```python
import asyncio


async def parallel_search(queries):
    tasks = [search_async(q) for q in queries]
    results = await asyncio.gather(*tasks)
    return results
```
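For a version that runs end to end, the sketch below supplies a stand-in `search_async` that only simulates I/O with a short sleep. That stub and the `run_searches` wrapper are assumptions for illustration; the real `search_async` would call the search backend.

```python
import asyncio


async def search_async(query):
    """Hypothetical stand-in for a real search call; sleeps to simulate I/O."""
    await asyncio.sleep(0.01)
    return {"query": query, "results": [f"result for {query}"]}


async def parallel_search(queries):
    # gather() runs the coroutines concurrently and preserves input order.
    return await asyncio.gather(*(search_async(q) for q in queries))


def run_searches(queries):
    """Synchronous entry point, e.g. for scripts or tests."""
    return asyncio.run(parallel_search(queries))
```

Because the searches overlap while waiting on I/O, the total time is close to that of the slowest single query instead of the sum of all of them. Results come back in the same order as the input queries.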

Use Cases

1. Enterprise Knowledge Management

- Search across internal documents

- Find experts and stakeholders

- Discover related projects

2. Research and Academia

- Literature review automation

- Citation discovery

- Research trend analysis

3. E-commerce

- Product discovery

- Recommendation engine

- Customer support

Deployment

Docker Compose Setup

```yaml
version: '3.8'

services:
  web:
    build: ./frontend
    ports:
      - "3000:3000"
    depends_on:
      - api

  api:
    build: ./backend
    ports:
      - "8000:8000"
    environment:
      - DATABASE_URL=postgresql://user:pass@db:5432/sigi
    depends_on:
      - db

  db:
    image: postgres:14
    environment:
      - POSTGRES_DB=sigi
      - POSTGRES_USER=user
      - POSTGRES_PASSWORD=pass
    volumes:
      - postgres_data:/var/lib/postgresql/data

volumes:
  postgres_data:
```

Future Roadmap

- Voice Search: Natural language voice queries

- Visual Search: Image-based search capabilities

- Real-time Indexing: Instant content updates

- Multi-language Support: Global search capabilities

- AI-Powered Summarization: Instant insights from results

Conclusion

SIGI represents a significant leap forward in search technology, combining the power of modern NLP with scalable web architecture. By focusing on contextual relevance and user intent, SIGI delivers search experiences that are both fast and meaningful.